The Need for Neuromorphic Computing

Neuromorphic computers are energy-efficient computers designed to mimic the human brain. They have the potential to dramatically improve computing in environments where size, weight, and power (SWaP) are constrained. However, neuromorphic computing is still in its infancy: inventing, designing, and optimizing neuromorphic computers is labor-intensive and requires a highly skilled workforce. To accelerate the development of neuromorphic computers, we created an artificial intelligence (AI)-enhanced codesign tool called Modular And Multi-level MAchine Learning (MAMMAL). MAMMAL can automatically design simple, dendrite-like signal processing circuits and provide specifications for novel devices.

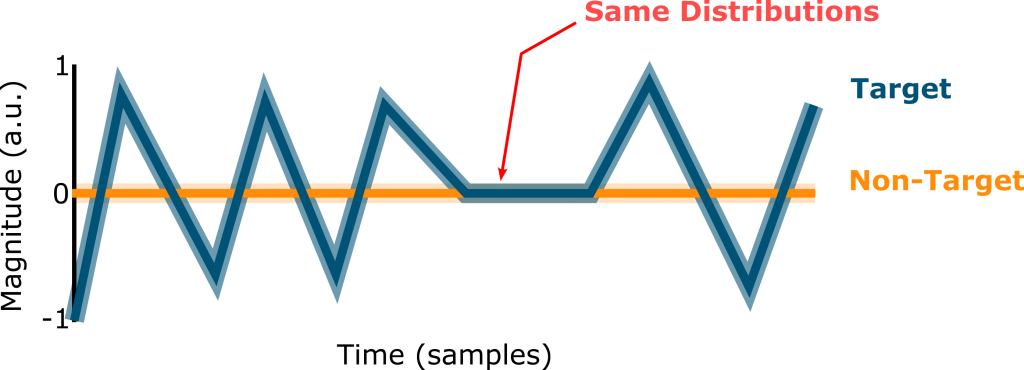

Consider the problem of signal detection in a SWaP-constrained environment.

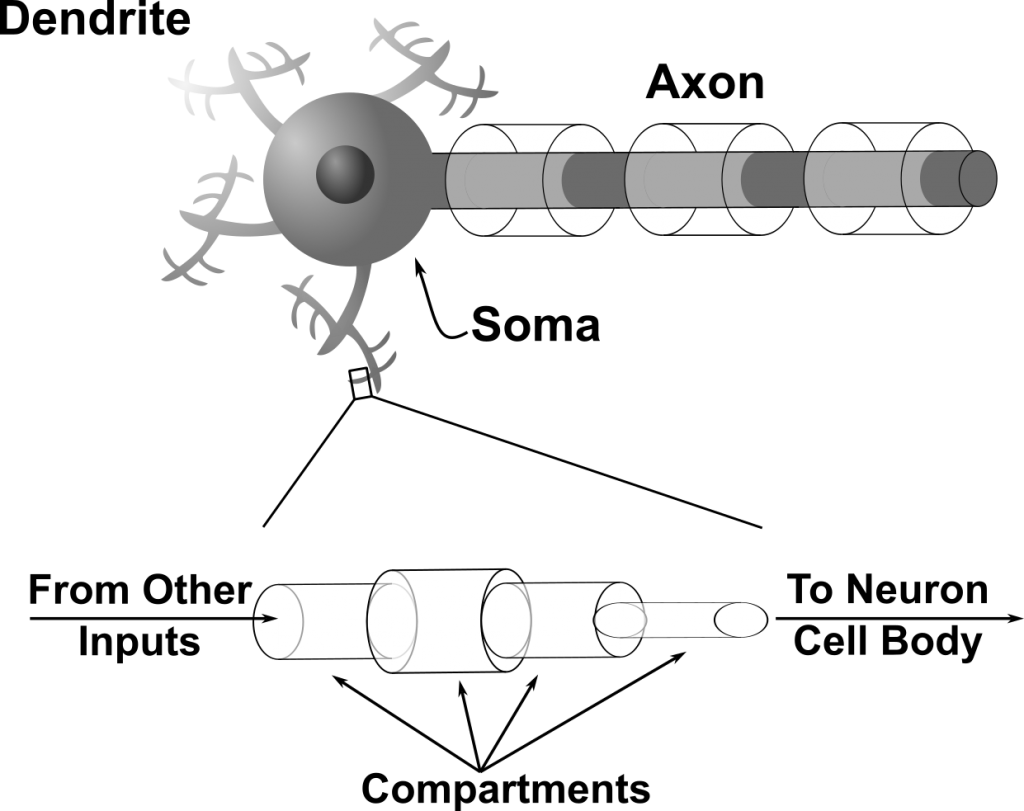

Biology solves this problem by using tiny, power-efficient circuits called dendrites, which are the inputs to neurons. These dendrites can be decomposed into separate sections called compartments. By changing the properties of compartments, such as their volumes, neurons can make themselves more sensitive to certain inputs and less sensitive to other inputs. In doing so, neurons can learn to discriminate between spatiotemporal patterns.

AI-Enhanced Neuromorphic Computer Design

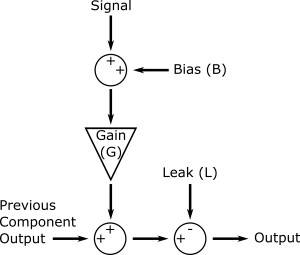

Learning from biology, we created a very simple, abstracted model of a dendritic compartment, shown on the right. The model has three parameters: a bias (B), a gain (G), and a leak (L). We then showed that AI could arrange these compartments into long chains and tune their B, G, and L parameters so that the chains perform signal discrimination.
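To make the B, G, and L parameters concrete, here is a minimal sketch of a compartment chain. The update rule is an assumption made for illustration, not the model from our publications: each compartment receives one signal sample, the value arriving from the previous compartment decays by the leak, and the biased, gain-scaled sample is added. The names `chain_output`, `detects`, and `threshold` are hypothetical.

```python
from typing import List, Sequence, Tuple

# One (bias, gain, leak) triple per compartment; the names mirror B, G, L in the text.
CompartmentParams = Tuple[float, float, float]


def chain_output(signal: Sequence[float], params: List[CompartmentParams]) -> float:
    """Propagate a signal along a chain of compartments (assumed update rule)."""
    if len(signal) != len(params):
        raise ValueError("expected one compartment per signal sample")
    v = 0.0  # value handed from one compartment to the next
    for x, (bias, gain, leak) in zip(signal, params):
        # Assumption: the upstream value decays by the leak, then the
        # biased, gain-scaled local sample is added.
        v = (1.0 - leak) * v + gain * (x + bias)
    return v


def detects(signal: Sequence[float], params: List[CompartmentParams],
            threshold: float = 0.5) -> bool:
    """Report a detection when the end of the chain exceeds a (hypothetical) threshold."""
    return chain_output(signal, params) > threshold
```

Under these assumptions, a larger leak makes a compartment forget earlier samples faster, while gain and bias set how strongly the local sample contributes. Tuning the triples therefore changes which spatiotemporal patterns push the chain output over the threshold.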

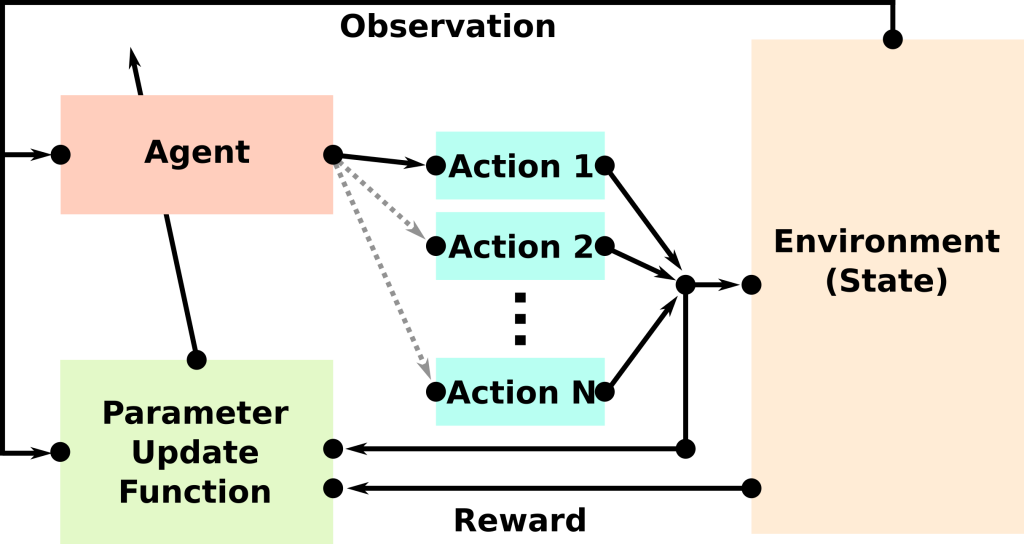

The specific type of AI that we used is called reinforcement learning. An overview of reinforcement learning is shown on the right. Reinforcement learning was originally developed to allow computers to learn in the same way that animals learn: by maximizing rewards. In the reinforcement learning paradigm, our circuit design tool (called an “agent”) chooses actions (the B, G, and L parameters), which are applied to the circuit (part of the environment, in the figure). To choose good actions, the agent observes the signal that it needs to detect. The agent learns by being rewarded for detecting the target signal and penalized for detecting the non-target signal.
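The interaction described above can be sketched as a single agent-environment step. This is an illustrative sketch only: it shows the observe, act, and reward cycle but not the learning algorithm itself. The zero reward for "no detection", the detection threshold, and the names `reward`, `run_step`, `toy_policy`, and `toy_circuit` are all assumptions introduced for this example.

```python
from typing import Callable, List, Sequence, Tuple

Params = List[Tuple[float, float, float]]  # one (B, G, L) triple per compartment
Policy = Callable[[Sequence[float]], Params]
Circuit = Callable[[Sequence[float], Params], float]


def reward(detected: bool, is_target: bool) -> float:
    """+1 for detecting a target, -1 for detecting a non-target."""
    if not detected:
        return 0.0  # assumption: no reward or penalty when nothing is detected
    return 1.0 if is_target else -1.0


def run_step(policy: Policy, circuit: Circuit, signal: Sequence[float],
             is_target: bool, threshold: float = 0.5) -> float:
    """One interaction: observe the signal, choose parameters, score the outcome."""
    action = policy(signal)            # the agent observes the signal and picks (B, G, L)
    output = circuit(signal, action)   # the environment applies them to the circuit
    return reward(output > threshold, is_target)


def toy_policy(signal: Sequence[float]) -> Params:
    """Placeholder policy: zero bias, unit gain, zero leak for every compartment."""
    return [(0.0, 1.0, 0.0) for _ in signal]


def toy_circuit(signal: Sequence[float], params: Params) -> float:
    """Placeholder circuit: sums the biased, gain-scaled samples and ignores leak."""
    return sum(gain * (x + bias) for x, (bias, gain, _leak) in zip(signal, params))
```

In a full training setup, the placeholder policy would be replaced by a trainable model whose parameters are updated to increase the expected reward over many signals.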

Using reinforcement learning to automate the design of neuromorphic circuits worked remarkably well. For signals that contained 10-20 samples, the signal discrimination accuracy was 97%. To push the limits of what our reinforcement learning agent could do, we tested its ability to discriminate between signals that had up to 100 samples, even though it was trained only on signals that had between 10 and 20 samples. Even on this difficult test, the reinforcement learning agent discriminated between signals with above 90% accuracy.

From Design to Discovery

As it turns out, reinforcement learning is not only useful for design but also a powerful tool for discovery. In the previous section, we demonstrated how reinforcement learning could be used to automate the design of neuromorphic circuits while using human-designed components (in that case, abstracted models of biological dendrites). But what if humans had no idea how to solve a given problem? What if we didn’t know what components to use? Could AI invent components for us? The answer is, “Yes!”

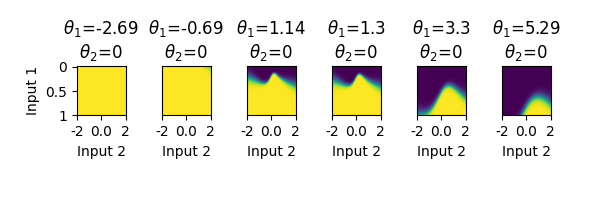

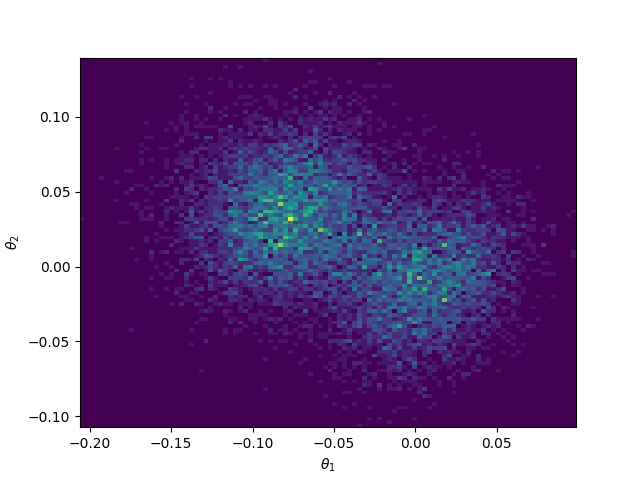

We repeated the signal discrimination task described above, but this time, instead of giving the AI a component to use, we asked the AI to invent its own component. As shown on the right, the AI was able to invent a novel component, controlled by 2 parameters that we called θ1 and θ2. By changing the values of the θ’s, it was possible to change the behavior of the component (top panel). The reinforcement learning agent was able to learn to tune these 2 parameters. As shown in the bottom panel, the learned θ1 and θ2 values formed 2 clusters. These clusters corresponded to the 2 different signal distributions (target and non-target signals).

What’s Next?

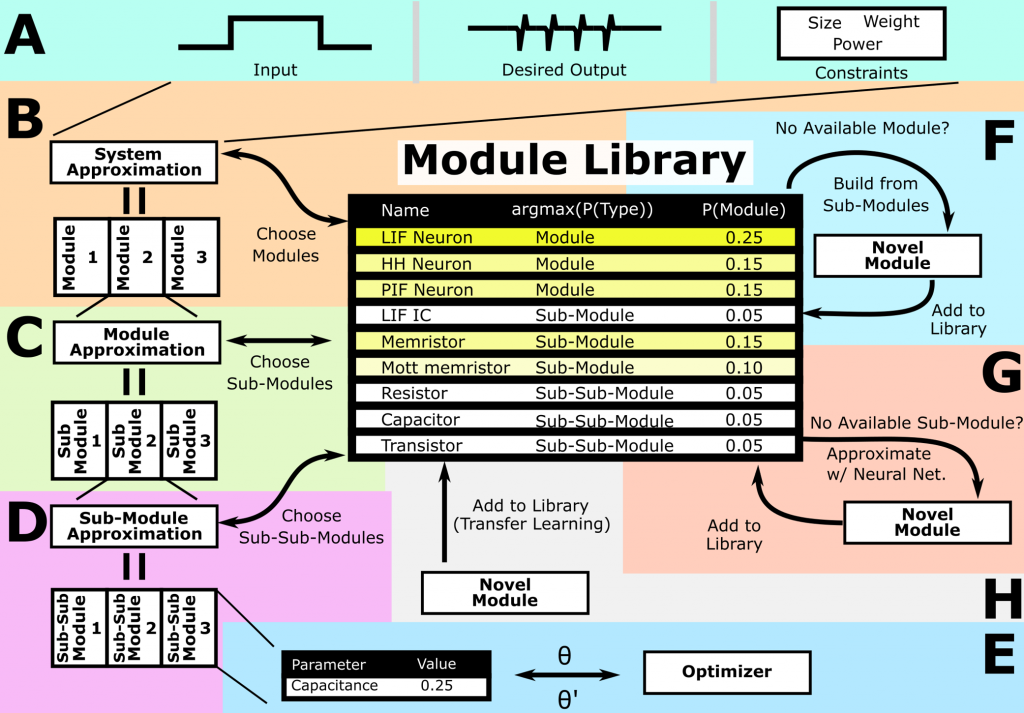

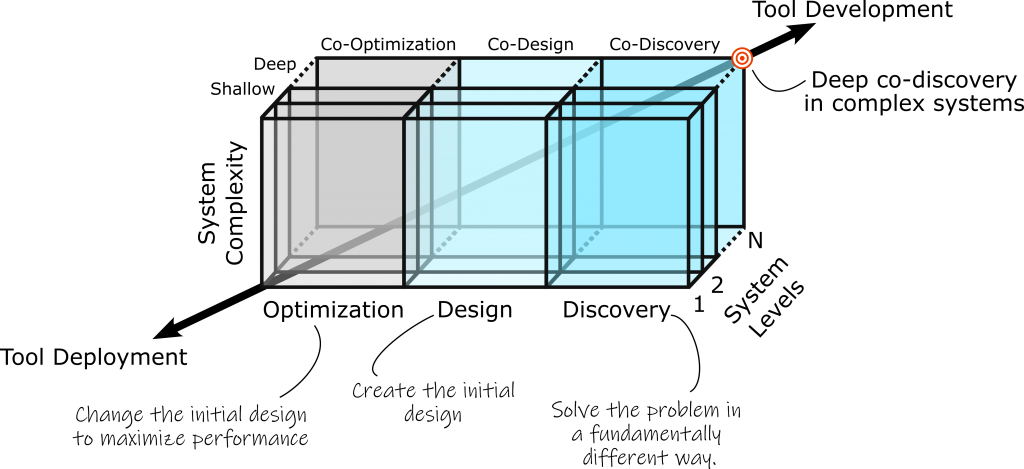

Our initial successes in designing and discovering new paradigms for neuromorphic computing are just the beginning. We are continuing to develop our system design tool, Modular And Multi-level MAchine Learning (MAMMAL), which is shown in the figure above. With MAMMAL, an engineer provides specifications and constraints for the system (panel A). MAMMAL then constructs the system by choosing high-level modules from a library (panel B) and refining them by choosing lower-level modules (panels C & D) as well as module parameters (panel E). MAMMAL can also create new high-level modules by combining lower-level modules (panel F) or by creating specifications for novel modules (panel G), as described above in our novel component discovery experiments. Lastly, MAMMAL can learn to use existing modules via transfer learning (panel H).
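One way to picture the multi-level refinement in panels B through D is as a tree of modules expanded from a library. The sketch below is a hypothetical representation for illustration, not MAMMAL's actual data model. The `Module` class, the `refine` function, and the library format (a mapping from a module name to the names of its lower-level modules) are all assumptions, and the library is assumed to contain no cycles.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Module:
    """Hypothetical system module with tunable parameters and lower-level children."""
    name: str
    params: Dict[str, float] = field(default_factory=dict)
    children: List["Module"] = field(default_factory=list)

    def leaves(self) -> List["Module"]:
        """Return the lowest-level modules in left-to-right order."""
        if not self.children:
            return [self]
        found: List["Module"] = []
        for child in self.children:
            found.extend(child.leaves())
        return found


def refine(name: str, library: Dict[str, List[str]]) -> Module:
    """Expand a module name into a tree using the library (assumed acyclic)."""
    children = [refine(child, library) for child in library.get(name, [])]
    return Module(name=name, children=children)
```

For example, with a library such as `{"detector": ["dendrite", "soma"], "dendrite": ["compartment", "compartment"]}`, calling `refine("detector", library)` builds a three-level tree whose leaves are two compartments and a soma. In this picture, choosing module parameters (panel E) amounts to filling in `params` on each node.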

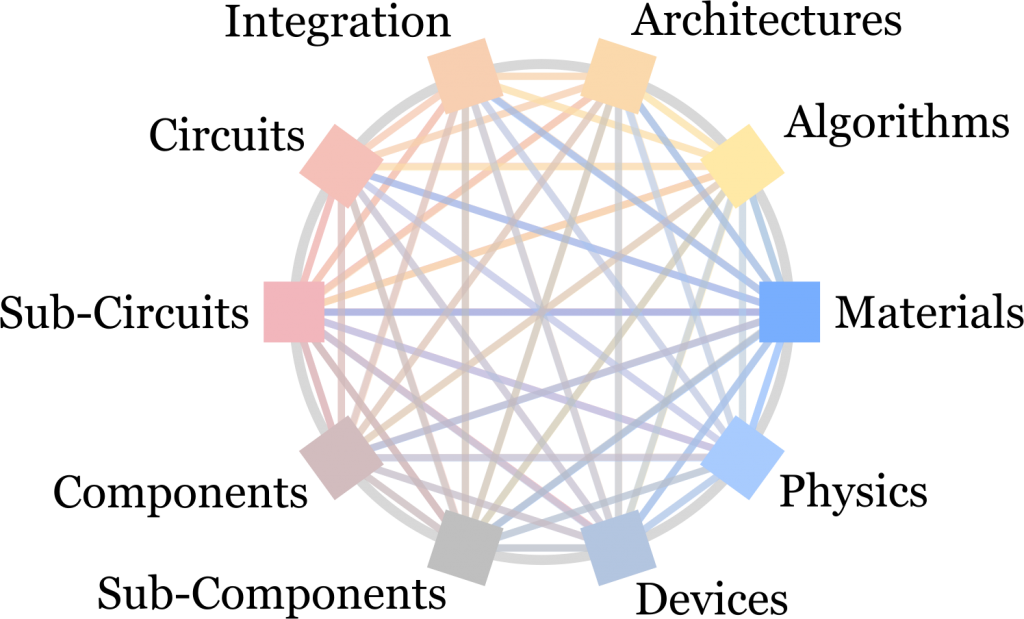

One of the most important features of MAMMAL is that it can be used to perform codesign – simultaneous design of multiple levels of a system, from materials to algorithms. It is believed that codesign will help us avoid local optima within the design space that are caused by sequential development of hardware and software.

Another defining characteristic of MAMMAL is that it allows us to move beyond optimization and design into discovery – the creation of fundamentally different designs. By enabling discovery, we hope that MAMMAL will dramatically accelerate innovation in electronics and beyond.

How Can I Learn More?

Our work has been published several times:

Crowder, D. C., Smith, J. D., & Cardwell, S. G. (2023, August). Deep Reinforcement Learning Methods for Discovering Novel Neuromorphic Devices. In Proceedings of the 2023 International Conference on Neuromorphic Systems (pp. 1-8).

Crowder, D. C., Smith, J. D., & Cardwell, S. G. (2023, August). AI-Enhanced Codesign of Neuromorphic Circuits. In 2023 IEEE 66th International Midwest Symposium on Circuits and Systems (MWSCAS), Phoenix, Arizona.

Crowder, D. C., Smith, J. D., & Cardwell, S. G. AI-enhanced Codesign for Next-Generation Neuromorphic Circuits and Systems. United States. https://doi.org/10.2172/1889339